
Making Freighter Faster: How We Improved Load Times by 63%

Author

Piyal Basu


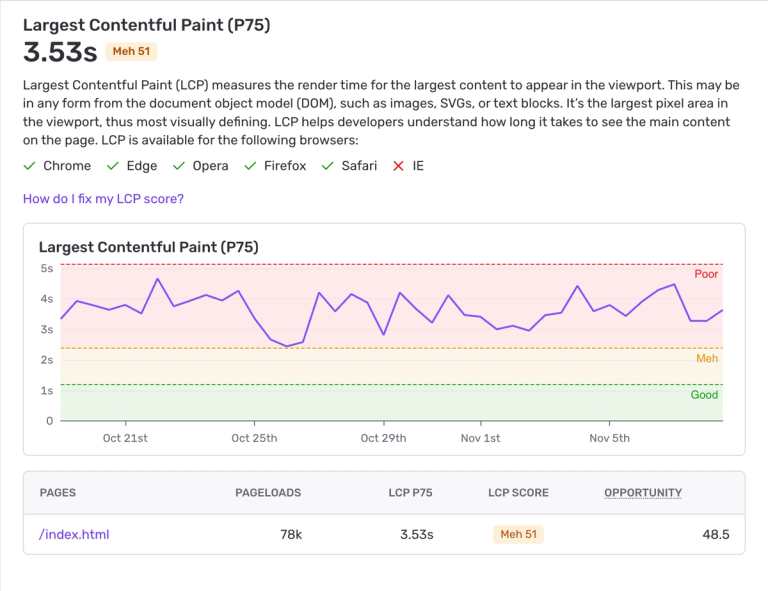

At Freighter, we’ve been obsessed with making our browser extension feel instantaneous. But after diving into real-world metrics, we discovered that initial load times were much slower than we wanted. Users were waiting over 3.5 seconds on average for their wallet to appear.

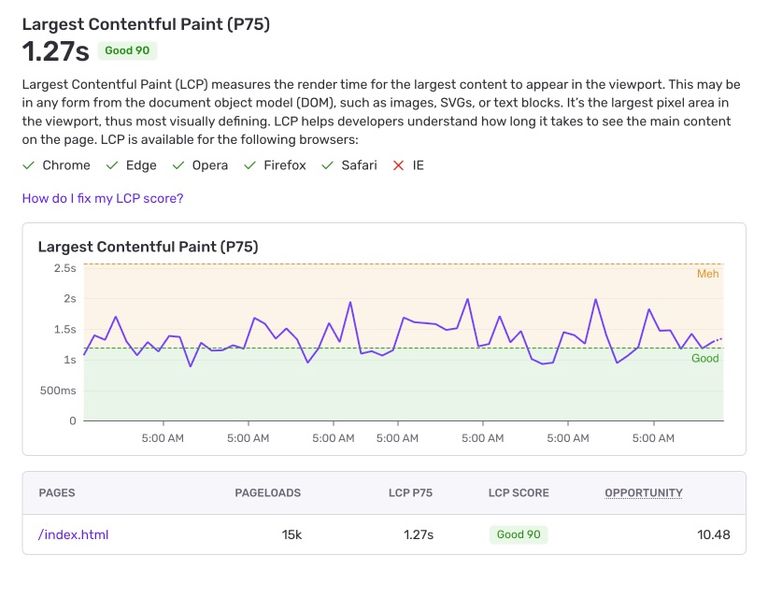

For a browser extension that users open multiple times per day, those seconds really add up and create a lot of friction for frequent users. After several weeks of auditing, profiling, and optimizing, we reduced the load time to 1.27 seconds—a 63% improvement that made the app feel dramatically faster and more responsive.

Here’s how we found our bottlenecks, the changes we made, and what we’re planning next.

What Was Wrong

Our first focus was initial page load time. Extensions behave differently than web apps; they don’t live in a tab that stays open. Users open and close them frequently throughout the day. If every open takes multiple seconds to load, the experience quickly becomes frustrating.

We measured performance using Largest Contentful Paint (LCP), gathering real-world data through Sentry’s performance monitoring. On average, users were seeing an LCP of 3.5 seconds when opening the Account view. Field data from Sentry mattered here because our local testing showed shorter load times. Clearly our experience wasn’t matching the average user’s, and we needed a holistic view of what that user was actually experiencing.

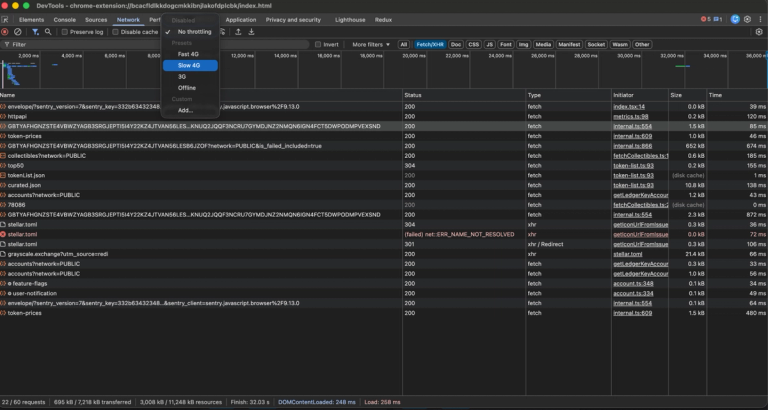

That 3.5 seconds represented the total time from page load, past the spinner, to a fully interactive state. We began by investigating what was contributing to this 3.5-second startup time. Using Chrome’s developer tools, we simulated different network speeds to pinpoint which processes took the longest. In an effort to give users access to all of their data at once, we were waiting for numerous APIs to resolve before displaying the UI. Some of these requests, like fetching icons, took much longer than others, and the UI could appear only once the slowest one had finished.

And the slowdowns didn’t stop there. Each time users navigated around the app—say, from Account to History and back—we were re‑fetching data unnecessarily. Account balances that hadn’t changed were being requested over and over again. This added more delay and redundant network calls.

Our goals became clear: get below 1.5 seconds for the initial LCP and stop re-fetching data needlessly.

What We Did

Step 1: Load Only What’s Needed

First, we tackled the slow load times on app startup. We were waiting for a lot of data to load from external APIs: account balances, account history, asset icons, and more. We pared this down to the bare minimum needed to show users their wallet, namely their account balances, so they could get started as soon as possible.

Step 2: Preload Data

Everything else, we moved to a background process. We started loading the user's account history, asset lists, and asset icons asynchronously after the user’s account balances were already loaded and visible. We then cached this data. This made navigating much faster. Now, when a user navigated to History, for example, they’d already have the necessary data cached and ready to serve. They wouldn’t need to wait for an API call to resolve before rendering the view.
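To make Steps 1 and 2 concrete, here is a minimal sketch of the pattern, not Freighter’s actual code. It assumes hypothetical fetcher functions (`fetchBalances` and a list of `background` tasks) standing in for the real API calls, and a simple in-memory `preloadCache`. Only the balance request blocks rendering; everything else starts afterwards and lands in the cache.

```typescript
// Hypothetical fetcher type: a stand-in for the real API calls, which the post does not show.
type Fetcher<T> = () => Promise<T>;

interface BackgroundTask {
  key: string; // cache key, e.g. "history", "assetLists", "icons"
  fetch: Fetcher<unknown>;
}

interface StartupDeps {
  fetchBalances: Fetcher<string[]>;
  background: BackgroundTask[];
}

// In-memory cache that later views can read from.
const preloadCache = new Map<string, unknown>();

// Renders as soon as balances arrive, then preloads everything else in the background.
async function startWallet(
  deps: StartupDeps,
  render: (balances: string[]) => void,
): Promise<{ preloaded: Promise<void> }> {
  const balances = await deps.fetchBalances(); // the only request the UI waits for
  render(balances);

  // Start the remaining requests in parallel. Nothing here blocks the UI.
  const preloaded = Promise.all(
    deps.background.map((task) =>
      task
        .fetch()
        .then((value) => {
          preloadCache.set(task.key, value);
        })
        .catch(() => {
          // A failed preload only means a cache miss later; the view can fetch on demand.
        }),
    ),
  ).then(() => undefined);

  return { preloaded };
}
```

The returned `preloaded` promise is optional to await. A view such as History would check `preloadCache` first and fall back to its own request on a miss.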

Step 3: Smarter Asset Icon Loading

We also revamped how we loaded asset icons. We used to follow a multi-step process:

- Load each asset issuer’s account from Horizon and then grab that asset’s home domain from the Horizon lookup. 😐

- Load the toml file attached to this home domain. 🥴

- See if the asset issuer listed an icon, and if they did, load it. 😩

The result was 3 round trips for 1 small PNG file! And this process would occur for every asset a user owned. We did cache the resulting icon URL to avoid repeating this multi-hop fetch, but the first load of each icon remained painful until a result was cached.

To improve this, we started by consulting the user’s asset lists for the icon. Since these asset lists represent some of the most popular tokens in the Stellar ecosystem, we felt confident this would be sufficient for a large number of icon requests.

If that didn’t yield any results, we’d resort to the old method with one important distinction: we started using ledger entries from Stellar RPC to fetch multiple accounts at once. We’d no longer have to iterate over all the user’s assets, grabbing the Horizon account for each one in search of a home domain. Now, we could just make one request and get all the asset issuers (and their home domains) in one call.
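The lookup order described above can be sketched roughly as follows. This is an illustration under assumptions, not the real implementation: `batchHomeDomains` is a hypothetical wrapper around a single batched request (the role ledger entries from Stellar RPC play in the post), `iconFromToml` is a hypothetical helper that reads an issuer’s published icon, and assets are keyed as `CODE:ISSUER` purely for the example.

```typescript
// Asset key in the form "CODE:ISSUER" (an assumption made for this sketch).
type AssetKey = string;

// Hypothetical: one batched request mapping issuer account IDs to home domains.
type BatchHomeDomains = (issuers: string[]) => Promise<Map<string, string>>;
// Hypothetical: reads the icon URL from the TOML file hosted at a home domain.
type IconFromToml = (homeDomain: string, asset: AssetKey) => Promise<string | undefined>;

const issuerOf = (asset: AssetKey): string => asset.slice(asset.indexOf(":") + 1);

async function resolveIcons(
  assets: AssetKey[],
  assetListIcons: Map<AssetKey, string>,
  batchHomeDomains: BatchHomeDomains,
  iconFromToml: IconFromToml,
): Promise<Map<AssetKey, string>> {
  const icons = new Map<AssetKey, string>();
  const misses: AssetKey[] = [];

  // 1. Cheap path: the user's asset lists already carry icons for popular tokens.
  for (const asset of assets) {
    const listed = assetListIcons.get(asset);
    if (listed !== undefined) icons.set(asset, listed);
    else misses.push(asset);
  }
  if (misses.length === 0) return icons;

  // 2. Fallback: a single batched request covers every remaining issuer.
  const issuers = Array.from(new Set(misses.map(issuerOf)));
  const domains = await batchHomeDomains(issuers);

  // 3. TOML lookups are still per asset, but they run in parallel.
  await Promise.all(
    misses.map(async (asset) => {
      const domain = domains.get(issuerOf(asset));
      if (domain === undefined) return; // issuer has no home domain
      const icon = await iconFromToml(domain, asset);
      if (icon !== undefined) icons.set(asset, icon);
    }),
  );
  return icons;
}
```

The key property is that the number of issuer lookups no longer grows with the number of assets: however many assets miss the asset lists, `batchHomeDomains` is called once.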

Step 4: Cache User Data Aggressively

Speaking of caching, we started caching users’ data more aggressively. Every piece of data we loaded stayed cached until the user closed the app. This made navigating throughout the app much faster, as we already had most of the data needed. To help ensure we didn’t show the user stale data, we would force a re-fetch of any cached data older than 3 minutes. Also, if we were taking an action that we knew would make the cache stale (like sending a payment or adding a trustline), we would opportunistically re-fetch the affected data.
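As a rough illustration of these rules, a session cache with a 3-minute maximum age and explicit invalidation might look like the sketch below. The class and method names are hypothetical, and the injectable clock exists only so expiry can be exercised without waiting.

```typescript
const MAX_AGE_MS = 3 * 60 * 1000; // 3 minutes, the limit mentioned in the post

interface CacheEntry<T> {
  value: T;
  storedAt: number; // timestamp in milliseconds
}

// Hypothetical in-memory cache: it lives only as long as the extension is open.
class SessionCache<T> {
  private entries = new Map<string, CacheEntry<T>>();
  private now: () => number;

  constructor(now: () => number = Date.now) {
    this.now = now;
  }

  // Returns the cached value, or undefined if it is missing or older than MAX_AGE_MS.
  get(key: string): T | undefined {
    const entry = this.entries.get(key);
    if (entry === undefined) return undefined;
    if (this.now() - entry.storedAt > MAX_AGE_MS) {
      this.entries.delete(key); // too old: the caller should re-fetch
      return undefined;
    }
    return entry.value;
  }

  set(key: string, value: T): void {
    this.entries.set(key, { value, storedAt: this.now() });
  }

  // Call after an action known to change the data, e.g. a payment or a new trustline.
  invalidate(key: string): void {
    this.entries.delete(key);
  }
}
```

A caller treats `undefined` from `get` as a signal to hit the network and then `set` the fresh value. After submitting a payment, it would call `invalidate` on the balances key before re-fetching.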

Account History provided another avenue for caching. When processing past transactions a user has been involved in, we’re often dealing with the same information multiple times. For example, a user may be interacting with the same Soroban contract numerous times. We shouldn’t be re-fetching that data for that contract every time it appears in History. Instead, when iterating over History transactions, we should be caching data as we go so it’s immediately available for the next iteration. We accomplished this using batch fetching.
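One way to picture this is the sketch below, which collects each contract ID once, asks for all unknown contracts in a single batch, and reuses the results on later passes. The types and the `fetchContracts` function are hypothetical stand-ins; the post does not describe the actual batch API.

```typescript
interface HistoryItem {
  id: string;
  contractId?: string; // present only for contract interactions
}

interface ContractInfo {
  name: string;
}

interface HistoryRow {
  item: HistoryItem;
  contract: ContractInfo | undefined;
}

// Hypothetical batch endpoint: many contract IDs in, one response out.
type FetchContracts = (ids: string[]) => Promise<Map<string, ContractInfo>>;

async function attachContractInfo(
  history: HistoryItem[],
  fetchContracts: FetchContracts,
  known: Map<string, ContractInfo> = new Map(),
): Promise<HistoryRow[]> {
  // Collect each unknown contract ID once, no matter how often it appears.
  const needed = new Set<string>();
  for (const item of history) {
    if (item.contractId !== undefined && !known.has(item.contractId)) {
      needed.add(item.contractId);
    }
  }

  // One batched request for everything not already cached.
  if (needed.size > 0) {
    const fetched = await fetchContracts(Array.from(needed));
    fetched.forEach((info, id) => known.set(id, info));
  }

  return history.map((item) => ({
    item,
    contract: item.contractId !== undefined ? known.get(item.contractId) : undefined,
  }));
}
```

Passing the same `known` map on subsequent calls means a contract that shows up again in a later page of History costs no additional request.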

What We Found

By and large, our measures paid off.

- LCP improved from 3.5s → 1.27s, landing firmly in the “Good” performance range per Sentry’s Web Vitals guidelines.

- The interface felt dramatically snappier.

- Navigation between views became almost instantaneous, since most requests now resolved from cache or background data refreshes.

We didn’t just see this in the numbers. Within days of deployment, feedback started rolling in from users across the ecosystem—people noticed and appreciated how fast Freighter now felt.

What's Next

While it’s great to be in the green zone, there’s still work to do.

1. Moving to a Custom Data Source

Today, our backend queries Stellar’s Horizon API for ledger data. Soon, we’ll migrate to our own wallet backend, optimized specifically for wallets. By serving only the minimal data a wallet needs, our account balance API call should resolve even faster.

2. Sidepanel Mode for Persistent Access

We’re also building a sidepanel mode that lets users pin Freighter on the side of their browser. Because an extension popup automatically closes every time you click away, this single feature has the potential to eliminate startup waits entirely for users who keep the panel open as they browse.

3. Improving Global Response Times

Breaking down Sentry data by geography revealed noticeable variances in LCP times across regions. With help from synthetic monitoring via New Relic, we identified higher network latency for our API endpoints from certain areas of the world. As a next step, we plan to deploy regional caching and servers to improve performance globally.

Wrapping Up

Optimizing Freighter wasn’t just about shaving milliseconds off load times—it was about making the app feel alive again. By rethinking our data flow, caching strategy, and API design, we turned an extension that felt sluggish into one that feels instant.

And we’re not stopping here. With a faster backend and sidepanel mode on the way, our next goal is even more ambitious: bringing Freighter’s load time under one second anywhere in the world.